Data Mesh Meets Universal Authorization

Data mesh has attracted intense attention because of its promise to deliver faster analytics in an agile and decentralized manner. It puts the responsibility for data quality and curation on data producers and owners within business “domains,” who understand the data best. They package the data for consumption as a “product.” The two major goals of data mesh are to (1) remove bottlenecks arising from centralized data engineering teams and (2) provide authorized data consumers with read-only, self-service access to curated data products.

Data mesh is a concept, not an implementation methodology. It does not prescribe any technologies or standards for its implementation. A data mesh may involve domain-level data warehouses or data lakes, but those are orthogonal to its principles.

For a data mesh to deliver on its promise, organizations must resolve the following issues:

- Data discovery mechanism

- Data quality and trustworthiness

- Standardization of common infrastructure and reuse of assets

- Interoperability of domains

- Self-service architecture

- Observability, governance, and security

Due to the lack of standard reference architectures, various organizations are developing custom solutions to enable data mesh. This paper focuses on the need for a cohesive and scalable data access governance and authorization mechanism. It proposes a unified approach that standardizes and simplifies data access governance through a consistent set of policies. Domain-specific policies link data consumer identities and their roles to the data, making cross-domain data available to every authorized user.

Key findings

Data value chain leaders responsible for evaluating and implementing data mesh should:

- Deploy a collaborative governance platform. Engage all data stakeholders so that the business, infosec, and data privacy teams work with the data and IT teams to deliver data to the business without compromising data security mandates or data privacy regulations.

- Design a common data access governance layer. Ensure data consumers have consistent access to common data products in different domains through centralized policy management. During the data mesh planning stage, run proofs of concept with products that provide data governance capabilities; governance should not be an afterthought.

- Implement a universal authorization layer. Permit consumers to search and analyze domain data without performance and scale bottlenecks. The universal authorization layer is typically a best-of-breed product deployed in a modular and composable architecture, capable of supporting multiple data storage technologies in a hybrid, multi-cloud environment.

Before delving into the security and governance aspects, let’s take a deeper dive into the concept of a data mesh.

Data mesh: a brief primer

Several excellent papers on data mesh have been written by its originator, Zhamak Dehghani, and by Thoughtworks. This section provides only a brief, high-level overview of its concept and principles.

Data professionals have been on a quest to provide business users with a common representation of data through various storage architectures that integrate internal and external data from different sources. This has taken the shape of enterprise data warehouses, data lakes, lakehouses, data virtualization, data sharing, and data fabrics.

These approaches rely on varying levels of data integration technology. They also rely on centralized data engineering teams that build the data pipelines to ingest, transform, and curate data. One issue with this model is that the data engineering team can become a bottleneck. It also creates a disconnect between data producers and data consumers: data producers often do not know how their data is being used, while data consumers are unaware of the data sources. Both rely on the data engineers to bridge the gap.

Data mesh has the potential to alleviate the problem of replicating data ad nauseam and creating multiple silos. A decentralized architecture to improve agility and time to insight is not a new concept; previous approaches have included data marts and data virtualization techniques. The data mesh approach introduces a set of principles that place greater accountability and ownership of data on the domains where data is produced, and de-emphasizes the current trend of creating a centralized analytical data store.

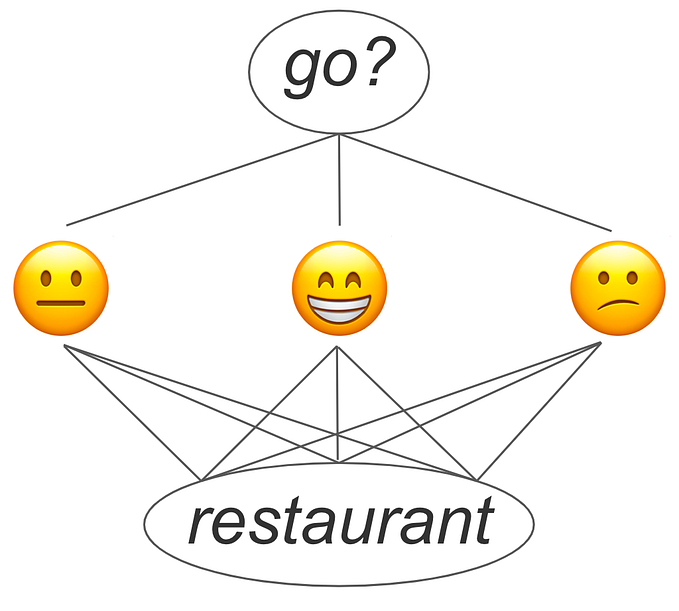

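Figure 1 shows a high-level overview of data mesh and its four principles: domain-oriented ownership, data as a product, self-serve data infrastructure as a platform, and federated computational governance.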

Figure 1. The four principles of data mesh.

Data mesh principles are designed to decrease the impedance mismatch between data producers and consumers. If implemented well, data mesh ensures that data consumers don’t have to guess whether they can trust the data or need to wrangle it, because data producers are accountable for its quality and accessibility.

Domain-driven design has been used to build software applications for many years. Data mesh applies the same principles to building data-intensive applications. Each domain is responsible for delivering data as a product and for ensuring its quality and accessibility.

Data products comprise data elements, associated technical schema metadata, business glossary terms, the data pipeline code used to generate them, documentation, usage examples, and even notebooks. Data product documentation describes the granularity of the datasets (e.g., raw versus aggregated data), the schema, and the mappings and transformations. Finally, data products expose SQL and programmatic notebook interfaces. Data producers across domains collaborate to standardize naming conventions for common data elements.

As with any new concept, data mesh comes with some unknowns. It is a top-down initiative that requires a shared vision of data culture across the organization and stakeholder buy-in. Its challenges start with foundational questions: what is it, and why is it better than past initiatives? All the parties involved, including the organization’s stakeholders and data infrastructure vendors, need to align. For example, business stakeholders need to clearly define what constitutes a domain within their organization. This is an iterative process that requires several rounds of refinement. Similarly, IT stakeholders need to ensure that the software components making up the mesh expose the required APIs.

Domain-level analytics work well until data consumers need cross-domain data to build dashboards and reports. This necessitates a well-designed data access layer.

Data access layer

Interoperability of data is one of the biggest challenges in meeting data mesh’s goal of sharing business context in a self-service manner to maximize its usability. Consider a retail organization that keeps customer order data in the sales domain. A business analyst uses that data to run customer journey analytics models. However, to perform customer churn analytics, the analyst also needs to tap into the customer success domain, which tracks support tickets, surveys, and social media posts.

Data mesh proposes a combination of distributed stewardship with logically and physically interconnected data. This interconnected data layer should provide a lean, cost-efficient analytical environment with the following benefits:

- Shared common standards

- Reuse of common resources

- Reduced integration overhead

- Deep skills in core technologies, rather than every department maintaining its own stack

However, the challenge lies in building a watertight security architecture for data mesh. This is how Thoughtworks describes the fourth principle, “federated computational governance”:

The last principle addresses the question around, “How do I still assure that these different data products are interoperable, are secure, respecting privacy, now in a decentralized fashion?”

…all of those policies that need to be respected by these data products, such as privacy, such as confidentiality, can we encode these policies as computational, executable units and then code them in everyday products so that we get automation, we get governance through automation?

The data mesh approach, which helps data producers quickly deliver high-quality data to the business, needs to be augmented by a universal authorization layer that knocks down data silos and automatically makes the necessary data available to authorized consumers.

Decentralized domain data stores need a centralized data access and governance layer to make data mesh work at scale

Secure, authorized data access has always been a critical requirement of every application, and data mesh is no exception. To access data from the sources of truth, the following initiatives are a must:

- Data discovery

Domain data has to be discoverable and accessible. Domains may have their own data catalogs that link business metadata to the domain’s technical metadata. In data mesh parlance, the catalog also includes data products.

In addition, a catalog of catalogs is needed to provide a cross-functional semantic layer for the common, shareable data products from different domains. This uber catalog carries an additional attribute: the domain ID. In short, a data mesh needs a federated data catalog architecture.
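To make the catalog-of-catalogs idea concrete, here is a minimal Python sketch, assuming each domain keeps its own catalog and flags which of its products are shareable. The class names (`DataProduct`, `DomainCatalog`, `FederatedCatalog`) and the sample domains are hypothetical illustrations, not the API of any catalog product. The uber catalog indexes only shareable products and keys them by domain ID, so the same product name can exist in two domains without colliding.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataProduct:
    name: str
    domain_id: str            # the extra attribute the uber catalog relies on
    description: str
    shareable: bool = False   # only shareable products reach the uber catalog


@dataclass
class DomainCatalog:
    """Hypothetical domain-level catalog holding that domain's own products."""
    domain_id: str
    products: dict = field(default_factory=dict)

    def register(self, name: str, description: str, shareable: bool = False) -> DataProduct:
        product = DataProduct(name, self.domain_id, description, shareable)
        self.products[name] = product
        return product


class FederatedCatalog:
    """Hypothetical catalog of catalogs, keyed by (domain_id, product name)."""

    def __init__(self, domain_catalogs):
        self.index = {
            (p.domain_id, p.name): p
            for catalog in domain_catalogs
            for p in catalog.products.values()
            if p.shareable
        }

    def search(self, term: str):
        term = term.lower()
        hits = [p for p in self.index.values()
                if term in p.name.lower() or term in p.description.lower()]
        return sorted(hits, key=lambda p: (p.domain_id, p.name))


# Usage: two domains, one private product that must stay out of the uber catalog.
sales = DomainCatalog("sales")
sales.register("orders", "Customer orders", shareable=True)
sales.register("draft_forecast", "Internal revenue forecast")
support = DomainCatalog("customer_success")
support.register("tickets", "Customer support tickets", shareable=True)

mesh = FederatedCatalog([sales, support])
print([(p.domain_id, p.name) for p in mesh.search("customer")])
# [('customer_success', 'tickets'), ('sales', 'orders')]
```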

Data catalog vendors, such as Alation, BigID, Collibra, and Informatica, provide centralized data curation and governance capabilities.

- Data access governance

Role-based access control (RBAC) combined with attribute-based access control (ABAC) should be used to reduce the complexity of access control and to apply consistent access policies at scale. User identities are tied to roles, and roles are tied to policies. The policies are further attached to the underlying data elements and their attributes, and even to user attributes, such as the data consumer’s geographical location or job title. For example, if a data element is tagged sensitive or confidential, the corresponding policy declares which roles have access to that element. Using the combination of tags and policies, fine-grained data access governance can be performed.
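The tag-and-policy combination can be sketched in a few lines of Python. This is a minimal illustration, assuming hypothetical users, column tags, and a single policy: the role check is the RBAC part, and the check on the consumer’s country is the ABAC part. Anything the sketch does not know about (an unknown user or an uncataloged column) is denied by default.

```python
from dataclasses import dataclass

# Hypothetical identity-to-role bindings (the RBAC half).
USER_ROLES = {
    "ana": {"analyst"},
    "raj": {"analyst", "privacy_officer"},
}

# Hypothetical tags on data elements, e.g. assigned by a catalog's classifier.
COLUMN_TAGS = {
    "orders.order_id": set(),
    "orders.customer_email": {"sensitive"},
}


@dataclass(frozen=True)
class Policy:
    tag: str                      # tag the policy governs
    allowed_roles: frozenset      # RBAC condition
    allowed_countries: frozenset  # ABAC condition on a user attribute


POLICIES = [
    Policy("sensitive", frozenset({"privacy_officer"}), frozenset({"US", "CA"})),
]


def can_read(user: str, user_attrs: dict, column: str) -> bool:
    if user not in USER_ROLES or column not in COLUMN_TAGS:
        return False  # deny by default
    roles, tags = USER_ROLES[user], COLUMN_TAGS[column]
    for policy in POLICIES:
        if policy.tag not in tags:
            continue
        if not roles & policy.allowed_roles:
            return False
        if user_attrs.get("country") not in policy.allowed_countries:
            return False
    return True


print(can_read("ana", {"country": "US"}, "orders.customer_email"))  # False: role missing
print(can_read("raj", {"country": "US"}, "orders.customer_email"))  # True
print(can_read("raj", {"country": "DE"}, "orders.customer_email"))  # False: location
```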

But the decentralized nature of data mesh exacerbates data access issues. First, the catalog needs to extend policy-based access control to data products. Second, it has to be aware of which domain each product resides in.

Data access governance products, such as Immuta, Okera, and Privacera, perform universal data authorization.

- Data observability

The final piece in operationalizing a data mesh-based analytics architecture is providing transparency into the internal state of data as it moves from the point of origin to the point of consumption. Data observability products should provide a multi-dimensional view of data, including its performance, its quality, and its impact on the other components of the stack. The overall goal is to see how well data supports business requirements and objectives.

Unlike data cataloging, data observability is a newer space, which has attracted many new entrants, such as Acceldata, Bigeye, and Monte Carlo.

Data access should be designed for multiple personas and for ease of use. Because data mesh is an approach and not a standard, it doesn’t prescribe the “hows,” which leaves it open to interpretation.

Universal data authorization

Consider an example in which a customer’s name and address reside in different domains. This is common in financial services, with domains such as retail banking, wholesale, business banking, lending and leasing, and capital markets. The customer attribute may be called client, account, party, etc., depending on the domain.

All occurrences of customers should be treated as PII, irrespective of domain. But creating separate policies for accessing customer data in each domain is error-prone, leads to inconsistencies, and does not scale. It is not practical to expect each domain to know that the same customer exists in other domains and to track their associated policies.
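One way to avoid per-domain policies is to classify data once, by business term, and map each domain’s local column names onto that term. The Python sketch below assumes a hypothetical glossary mapping and a single PII rule; a new domain only adds glossary entries, and the same rule covers it automatically. The column names, the `privacy_officer` role, and the `***` masking placeholder are illustrative.

```python
# Hypothetical business glossary: (domain, local column) -> shared business term.
GLOSSARY = {
    ("retail_banking", "customer_name"): "customer.name",
    ("lending", "client_name"): "customer.name",
    ("capital_markets", "party_name"): "customer.name",
    ("business_banking", "account_holder_address"): "customer.address",
    ("lending", "loan_amount"): "loan.amount",
}

# Classified once, enterprise-wide, instead of once per domain.
PII_TERMS = {"customer.name", "customer.address"}


def is_pii(domain: str, column: str) -> bool:
    # Unmapped columns look up None, which is never in PII_TERMS.
    return GLOSSARY.get((domain, column)) in PII_TERMS


def visible_value(domain: str, column: str, value, roles: set):
    """The single PII rule: mask unless the caller holds the privacy_officer role."""
    if is_pii(domain, column) and "privacy_officer" not in roles:
        return "***"
    return value


print(visible_value("capital_markets", "party_name", "Acme Ltd", {"analyst"}))  # ***
print(visible_value("lending", "loan_amount", 250000, {"analyst"}))             # 250000
```

Unmapped columns fall through as non-PII in this sketch; a production setup would more likely flag unclassified columns for a steward to review.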

The centralized governance layer comprises a data catalog, a data access governance product, and a data observability tool. Today, initial data mesh deployments rely on homegrown applications. However, a few data access governance products and data catalogs can fill the void with state-of-the-art solutions.

Data access governance products should govern access to data residing in multiple locations. They do so by centralizing access policies and applying them to data elements, irrespective of their location. They dynamically apply policies based on user identities and use privacy-preserving techniques, such as masking, tokenization, and other forms of anonymization.
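Masking and tokenization can be applied at read time, depending on who is asking. The sketch below is a minimal Python illustration, assuming a hypothetical role-to-treatment mapping. It uses a keyed HMAC-SHA256 hash as a stand-in for tokenization: the same input always yields the same token, so tokenized columns can still be joined, but unlike vault-based tokenization the original value cannot be recovered. The secret key shown is a placeholder.

```python
import hashlib
import hmac

SECRET_KEY = b"placeholder-key"  # hypothetical; in practice, fetch from a key management service


def mask_email(email: str) -> str:
    """Keep the first character and the mail domain, hide the rest."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}***@{domain}"


def tokenize(value: str) -> str:
    """Deterministic keyed hash (HMAC-SHA256), standing in for a tokenization service."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


# Hypothetical policy: treatment per role, listed from most to least privileged.
TREATMENTS = [
    ("privacy_officer", lambda v: v),
    ("analyst", tokenize),
    ("marketing", mask_email),
]


def apply_policy(value: str, roles: set):
    for role, treatment in TREATMENTS:
        if role in roles:
            return treatment(value)
    return None  # no matching role: withhold the value entirely


email = "jane.doe@example.com"
print(apply_policy(email, {"marketing"}))                    # j***@example.com
print(apply_policy(email, {"analyst"}) == tokenize(email))   # True
print(apply_policy(email, set()))                            # None
```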

Data catalogs discover and profile data and infer business metadata. They also allow access policies to be defined for data elements and their tags. A universal data authorization product then enforces those policies. This centralized mechanism is also needed to provide a comprehensive audit log, consistent across all data platforms, for compliance or root-cause analysis.

This architecture ensures that different domains do not end up with inconsistent access policies for cross-domain data products such as customer data. The centralized access layer can ensure that data privacy regulations, such as data residency requirements, are consistently enforced and logged.
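A residency check that also writes a uniform audit record might look like the following Python sketch. The residency table, region codes, platform labels, and record fields are hypothetical; the point is that the same decision function, and therefore the same audit record shape, is used no matter which platform served the request. An in-memory list stands in for a central, append-only audit store.

```python
from datetime import datetime, timezone

# Hypothetical residency rules: regions from which each dataset may be accessed.
RESIDENCY = {
    "eu_customers": {"EU"},
    "us_orders": {"US", "EU"},
}

AUDIT_LOG = []  # stand-in for a central, append-only audit store


def authorize(user: str, user_region: str, dataset: str, platform: str) -> bool:
    allowed = user_region in RESIDENCY.get(dataset, set())  # unknown dataset: deny
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "dataset": dataset,
        "platform": platform,  # same record shape regardless of platform
        "rule": "data_residency",
        "decision": "allow" if allowed else "deny",
    })
    return allowed


authorize("ana", "US", "eu_customers", "warehouse")  # denied: EU data, US user
authorize("luc", "EU", "eu_customers", "lakehouse")  # allowed
for record in AUDIT_LOG:
    print(record["platform"], record["dataset"], record["decision"])
```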

As data mesh establishes itself further, the performance and scalability of the data access governance layer become key evaluation criteria. Data architects should evaluate products that add as little latency as possible when enabling access to data products. A successful deployment is one in which the authorization platform is invisible to data consumers browsing the data catalog for data products.

Data stewards create various policies pertaining to their data products. They reside both in the domains and in the central team: domain stewards curate data local to their domains, while central stewards handle cross-domain products. The data stewards evaluate the universal data authorization product for its ease of use, such as the experience of building policies in a no-code manner through a policy builder UI, or programmatically through APIs. The policy library then becomes the single source of truth, allowing key policies to be mandated enterprise-wide while allowing distributed stewardship of domain-specific policies.
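A policy library that mixes enterprise-mandated and domain-owned rules can be sketched as follows. This minimal Python example assumes a deny-overrides evaluation: every applicable rule must allow the request, enterprise rules apply to every domain, domain rules apply only to their own domain, and a request that no rule covers is denied. The rule names, request fields, and roles are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

ENTERPRISE = "enterprise"


@dataclass(frozen=True)
class Rule:
    name: str
    owner: str                          # ENTERPRISE or a domain ID
    applies_to: Callable[[dict], bool]  # does this rule cover the request?
    allows: Callable[[dict], bool]      # does the request satisfy the rule?


class PolicyLibrary:
    """Hypothetical single source of truth for enterprise and domain rules."""

    def __init__(self):
        self.rules = []

    def add(self, rule: Rule) -> None:
        self.rules.append(rule)

    def decide(self, request: dict):
        applicable = [r for r in self.rules
                      if r.owner in (ENTERPRISE, request["domain"]) and r.applies_to(request)]
        if not applicable:
            return False, []  # nothing covers the request: deny
        failed = [r.name for r in applicable if not r.allows(request)]
        return not failed, failed  # deny-overrides


library = PolicyLibrary()
# Mandated enterprise-wide by the central stewards.
library.add(Rule("pii-needs-training", ENTERPRISE,
                 lambda req: "pii" in req["tags"],
                 lambda req: req["user"].get("privacy_trained", False)))
# Owned by the sales domain's stewards.
library.add(Rule("sales-readers", "sales",
                 lambda req: True,
                 lambda req: "sales_analyst" in req["user"]["roles"]))

request = {"domain": "sales", "tags": {"pii"},
           "user": {"roles": {"sales_analyst"}, "privacy_trained": False}}
print(library.decide(request))  # (False, ['pii-needs-training'])
```

Returning the names of failed rules alongside the decision gives stewards an explanation they can surface in the catalog or the audit log.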

Several capabilities matter most in a universal data authorization product. First and most critically, it must apply policies dynamically, with each query. Second, the platform deployed for data mesh governance should integrate with a wide range of data sources, such as data lakes and cloud data warehouses, and with analytical and data science tools. Extensibility through APIs is also an important consideration, because analytical architectures are highly fluid, with novel concepts such as metric stores, semantic layers, and feature stores.
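Applying policies with each query means the decision is made when results are produced, not when data is copied or a view is materialized. The Python sketch below assumes a hypothetical in-memory policy store and filters the rows a query returns; because the store is read on every call, a policy change takes effect on the very next query. Letting columns with no policy pass through unchanged is a simplifying assumption of this sketch.

```python
# Hypothetical central policy store: column -> {role: action}.
policy_store = {
    "customers.email": {"analyst": "mask", "privacy_officer": "allow"},
}


def enforce(rows, columns, user_roles):
    """Apply the current policies to query results at query time."""
    result = []
    for row in rows:
        out = {}
        for col in columns:
            rule = policy_store.get(col)  # looked up fresh on every query
            if rule is None:
                out[col] = row[col]       # ungoverned column: pass through
                continue
            actions = {rule[role] for role in user_roles if role in rule}
            if "allow" in actions:
                out[col] = row[col]
            elif "mask" in actions:
                out[col] = "****"
            # any other action (or no matching role) drops the column
        result.append(out)
    return result


rows = [{"customers.id": 1, "customers.email": "a@example.com"}]
cols = ["customers.id", "customers.email"]
before = enforce(rows, cols, {"analyst"})
policy_store["customers.email"]["analyst"] = "deny"  # steward tightens the policy
after = enforce(rows, cols, {"analyst"})
print(before)  # [{'customers.id': 1, 'customers.email': '****'}]
print(after)   # [{'customers.id': 1}]
```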

Finally, favor a platform that minimizes complexity and avoids strong vendor lock-in.

Conclusion

Will data mesh be the panacea for data-related issues in the upcoming years? The naysayers are quick to point out that the issues this approach addresses, and the principles it proposes, are not new. While that is true, data mesh brings a fresh perspective. Data quality is an ever-present issue that past approaches have failed to resolve, and data mesh’s domain emphasis offers another way to tackle it. It is a truism that data scientists spend the majority of their time wrangling data; data mesh’s attempt to treat data as a product can certainly help.

A few large organizations that have implemented data mesh, such as JPMC, Flexport, and Intuit, report several benefits, including:

- Reducing the time between when consumers request new features and when data engineering teams deliver the functionality

- Fewer ad hoc requests for data on channels such as Slack

- Higher usage of data when it is made available as a product

But challenges abound too. One of the most common questions is which organizations should consider data mesh as a future-state analytics architecture. The consensus is that data mesh suits organizations with very large data volumes, especially when that data is spread across many business units. If a business is not facing data engineering bottlenecks, data mesh may not be a suitable approach.

The biggest deterrent to data mesh is its lack of implementation details. No reference architecture exists, and tooling support from software vendors is limited. As a result, various organizations are deploying their own distributed architectures and calling them data meshes, which can lead to more confusion.

The ultimate challenge is operationalizing analytics through a consistent data access governance mechanism that covers both domain and shared data. While no standards have yet emerged, conventional approaches are not suitable. Companies pursuing data mesh need to introduce universal data authorization into their data stack.